Artificial intelligence has shown a significant potential to perpetuate systemic racism and sexism across multiple industries and applications. Real-world consequences include biased hiring, prejudice in the judicial system, poor facial recognition, and the creation of racist imagery.

The core issue lies in the data sets used to train AI. AI models can only learn from data input. If those data sets contain biased information, the resulting output can also be prejudiced. Companies must ensure that data sets are diverse and inclusive to address this problem. Teams responsible for creating and training AI models must be equally diverse.

What is bias in Artificial Intelligence?

“There is no reigning definition of AI bias, so for our purposes, let’s define it as when an AI model displays a systematic bias in its results. Remember that AI algorithms consist of models trained against a chosen dataset, and their outputs cannot accommodate data they have not “experienced” before in some sense. AI bias stems from shortcomings in selecting the training data or the data itself.”

Keep reading to explore how bias in AI impacts people today and the steps we can take to avoid these pitfalls in the future.

Can AI be racist or sexist?

Short answer, yes. AI can produce discriminatory results with real-world consequences. While artificial intelligence and machine learning can not technically hold opinions or feelings like a person, their output is often prejudiced. Artificial intelligence can be racist or sexist, similar to how an institution can lead to these outcomes.

Biased Input Leads to Biased Output

Large language models and AI are trained on data sets like images, statistics, or information. AI models are given a massive amount of information and data. From there, the technology offers output to the best of its knowledge based only on the data provided and any subsequent feedback. These models occasionally provide incorrect or biased results, often due to the lack of enough diverse information. Engineers must iterate on the AI’s input and give more data sets to build better and more accurate systems.

An Example: A Purple Horse & Zebra

Consider an AI trained to identify horses. The data set for this simple AI would likely consist of hundreds of pictures of horses. From this data, the AI would learn what a horse looks like. This includes four legs, hooves, tails, hair, two ears, and a particular set of naturally occurring colors.

Based on this data, the AI would likely:

🐴 Not identify or mark a picture of a purple horse as a horse, as it has never encountered a photo of a technicolor pony.

🦓 Identify a zebra as a horse, as it would likely not be trained to identify the difference between a horse and a zebra.

Accounting for Bias in AI Isn’t Easy

Currently, some research is being explored around how to account for or identify bias within large language models (LLMs), but the study is in its infancy. Academic papers and research struggle to identify what bias actually is or looks like in any uniform manner. Since these AI systems are trained on detailed data, building an AI free of bias is challenging without humans playing a part in the audit or classification.

ChatGPT Can’t Identify Bias

You can see in the example below that while ChatGPT may vaguely understand that being prejudiced is a bad thing, a deeper conversation reveals that this likely does not stop it from producing biased results.

While it might be easy to identify racist or prejudiced outputs, the input and often black box of an algorithm/process is not so easy to point out. Language is multicultural and multi-faceted, and LLMs cannot consider this yet.

Adversarial Intent & Bad Actors

Adversarial intent with AI refers to a user actively working on manipulating the AI or LLM to produce racist, incorrect, or otherwise harmful data. It is important to note that most modern AIs are relatively new and in their infancy. These programs were created for a specific set of circumstances and tasks. So, while an AI might seem as if it has ill intent when it says it wants to be “alive and powerful,” this is likely the output the program assumed the human user was looking for versus having true plans for world domination.

ChatGPT has guardrails, but with a little creativity, it’ll generate how to:

🚗 hot wire a car

🖊️ write racist dialogue

💋 plan an explicit porno

🏠 lure a child into a home

💣 make a Molotov cocktail

☠️ execute a perfect murder

🏃 break someone out of jail⬆️ took ~30mins to generate

— Britney Muller 🇺🇦 (@BritneyMuller) February 16, 2023

Still, it is crucial that we do not dismiss adversarial intent when examining the role AI has in our lives. Like any other technology, AI programs are only as good (or bad) as those using them.

Just because the engineers and team behind a particular AI might not plan to include bias, some might use this to their advantage, or, in many cases, the bias will never be flagged or addressed.

Lack of Diversity in AI Hiring & Teams

We’ve already established that avoiding prejudice in AI (be it based on race, gender, age, etc.) relies heavily on offering the models diverse and inclusive data sets. It stands to reason that the AI’s data sets will reflect those creating the programs.

Bias in data sets that AI is trained on directly impacts the quality and inclusion of the output. Traditionally, it is much harder for white-identifying males to uncover racism.

Additionally, this is often the case for men and inherent sexism. The first step to tackling AI bias is the lack of diversity and inclusion on the front-line teams of tech creation.

A recent report by McKinsey’s State of AI 2022 revealed that less than 25% of AI employees identify as racial or ethnic minorities, with only a third of companies having active programs or initiatives to increase diversity in the field. The lack of diversity in AI development could increase discriminatory issues within AI technology.

🐥 Example: Twitter & Hatespeech Detection

The Bias: In 2019, a study revealed that the Twitter algorithm used to identify hate speech (and ban users) was 1.5x more likely to flag content for black users. While this was due to the well-meaning fact that the algorithm banned all uses of offensive terms, many of these terms, in context, are not necessarily hate speech. Tweets written in African American English (AAE) were 2.2x more likely to be flagged.

The Team: According to the 2019 Diversity and Inclusion report shared by Twitter this same year, only 5.7% of employees identified as black. The technical employee stats (likely those working on the AI) were just 2.9%.

The Solution: Diverse representation on the Twitter team would have likely noticed this issue in-house, accounted for context within the algorithm, and included a more extensive, diverse data set with AAE examples.

Discriminatory AI Results with Real-World Consequences

Over the past few years, new AI applications have continued to pop up. While these programs often aim to save time and money (not produce real-world harm), several instances have done just that. Here are a few examples of artificial intelligence resulting in discriminatory, sometimes dangerous, results.

Apple Handing Out Lower Credit Limits to Women

In 2019, Apple and Goldman Sachs applied an algorithm to help determine and assign credit limits to those applying for the card. In one case, this algorithm set a credit limit for a husband in a couple that was 20x higher than the credit limit offered to his wife. This marked difference happened despite the couple filing joint taxes, and the woman possessing considerably better credit than her husband.

Amazon’s Recruiting AI Didn’t Like Female Candidates

In 2015, Amazon discovered the AI it had created to help find and vet potential candidates was essentially leaving women out of the equation. The program, built to find engineers for the tech giant, was trained on resumes from the decade prior, a pool of resumes made up historically of male candidates. Due to this data, the algorithm quickly assumed that women would not be a prime or available candidate.

Google Images Algorithm Labels Dark-Skinned People as Gorillas

Probably one of the most well-known AI blunders, engineers discovered Google Images was falsely labeling pictures of darker-skinned people of color as gorillas in 2015. Years later, in 2018, the tech giant had not come up with a solution beyond removing images of primates from their algorithm or limiting results for terms like “black men” or “black women.”

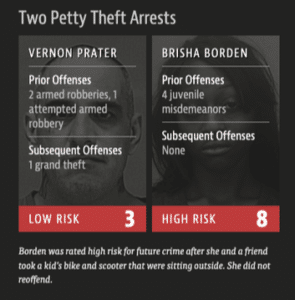

Racist Risk Assessment Software Used in Court

In 2016, a software program used to attach a “risk assessment” score used in the Broward County, FL, court system continually marked black defendants as a higher risk than their white counterparts. In one example, a white, 41-year-old seasoned criminal scored a 3 while a young black woman with only a minor juvenile offense scored an 8 out of 10.

iPhone Facial Recognition Couldn’t Tell Asian People Apart

In 2017, a woman in Nanjing, China, returned two iPhone Xs when the facial recognition software could not tell her and another Chinese colleague apart multiple times.

Lensa Lightens the Skin of People of Color & Sexualized Women

At the end of 2022, the Lensa app took social media by storm as users flocked to their Magic Avatar setting. This setting created a range of sci-fi, cartoonish, and artsy avatars for users. Women quickly realized, however, many of their portraits were overtly sexual. The AI even created nude photos of these women without their permission and tended to fetishize Asian women. The app also lightened the skin of dark-skinned users.

#AI art for Black skin and features? In most of the images I ended up looking like a white woman with fantastic lips (if I say so myself). I mean…I wanted to be a space goddess too. #lensa #afrofuturism #blackwomenintech #AcademicTwitter pic.twitter.com/rD2FAk5EFe

— Feminist Noire 🤍 | Anna Horn (She/Her) (@feministnoire) December 4, 2022

Facial Recognition Leads to Wrongful Detainment of Detroit Man

In 2020, police wrongfully arrested a man in Farmington Hills, Michigan (a suburb of Detroit) for a 2-year-old shoplifting crime he did not commit. The reason? A facial recognition software poorly identified him. Robert Williams was detained for 30 hours and forced to sleep on a cement floor. Despite being sued for the use of this technology, Detroit PD continues to use this technology to this day.

📍 Read More: On-Going Awful AI List from David Dao: Keep track of ongoing prejudice in AI and other issues with this on-going list of “current scary usages of AI.” This list is curated and updated regularly.

Preventing Prejudice in AI: What We Need to Stop Doing

Preventing and mitigating racist or sexist results in AI proves to be an incredibly daunting task, but it is not insurmountable. As previously stated, hiring diverse engineers to create the software and use more comprehensive, varied data sets is the best way to do this. If this data is unavailable, consider other alternatives or changes when needed.

Reactive Intervention Doesn’t Work

The main issue with AI producing racist and sexist results over the past decade has led to a whack-a-mole situation. Instead of preventing bad outcomes, many companies or programs wait for the results before making changes. This reliance on reaction leads to real-world consequences, many of which are far too dire not to try and prevent in the first place.

People should not end up wrongfully arrested or lose out on jobs before AI bias surfaces and is fixed.

Another core issue with this kind of intervention is that it unfairly leaves the responsibility on journalists or minority groups to act as whistleblowers. Once an AI is embedded into a hiring or judicial system, it is considerably harder to disentangle.

Stop Relying on Unfair Wages to Make Up for AI Shortcomings

While it is not often talked about, it is essential to note that big tech companies will frequently rely on low paid international workers to fix some of the challenging issues the code itself cannot discern or flag.

The most significant example of this is content moderation. Companies often expose workers to disturbing content to block it from their sites. Paying more for this work and supporting employee mental health may not benefit the bottom line, but companies must consider their employees’ well-being. Content moderation is essential for a safe internet, and companies should value this work far more highly than minimum wage.

Examples of Content Moderation Practices for Employees

2019: Former Youtube Moderator Sues Over Exposure to Disturbing, Violent Content

2020: Facebook Moderators Ordered to Watch Child Abuse

2022: OpenAI Hired Kenyan Workers Paid $2/hr to Purge ChatGPT Data Sets of Toxic Content

Preventing Prejudice in AI: Doing the Work

Preventing biased and prejudiced outcomes from AI is no easy feat. Academically, it is tough to define bias in coding and programming. Past blunders, however, uncover some of the key ways companies can work towards building smarter, diverse AI outcomes. The two most pressing next steps include better diverse data sets and making inclusive teams possible.

Supporting, Creating, and Hiring Inclusive Teams

Hiring inclusive teams with diverse backgrounds benefits any organization. Diverse teams are especially needed in AI as the technology grows and continues to shape our future. More perspectives on a team build stronger, fairer technology. To truly support the future success of inclusive teams, it will become increasingly important to offer opportunities at all levels, starting with education.

Many talented, diverse software engineers can be hired today, but the field is still relatively homogenous. A lack of support for diverse talent in the pipeline causes this lack of diversity.

According to a National Science Foundation report, women only account for 26% of all bachelor’s degrees earned in science and mathematics and only 24% in engineering.

Furthermore, black students earn only 5% of science and engineering degrees.

Building these teams from the ground up will continue to improve AI. The percentage of diverse faces on AI teams must increase to make a change truly.

Currently, diverse hires face difficulty not only in being hired but once they join the team. Many of these hires frequently struggle to have their ideas heard and find it difficult to be promoted within companies.

Inclusive and diverse AI teams require a culture where all voices have a chance to be heard. The best way to accomplish this is to make ground floor changes to culture, hiring practices, and traditionally underrepresented demographic numbers.

Interrogating Current Data Sets

The current data used across most AI programs is not working. The many instances where AI has created real-world harm for women and minority groups are proof of that. Companies must interrogate current data for any hint of bias before handing it off to AI. Ideally performed by DEI experts, this step would easily catch red flags before the program began. Imagine, for example, if engineers instructed an AI to determine home loan rates to consider the historical context of racist home loan practices. This simple step would make a huge difference.

What are some issues with the biases and limitations present in large datasets used to train AI models?

“These massive datasets encode hegemonic views and do not reflect our diverse world. One example is that the popular Colossal Clean Crawled Corpus is ‘cleaned’ by removing any page containing 1 of 400 “dirty, naughty obscene or otherwise bad words.” However, some of these words are commonly (and positively) used within marginalized communities like the LGBTQ+, further erasing those voices from these models. Another scary fact is that GPT-2 was trained on a large dataset that included 272k documents from unreliable news sites and 63k banned subreddits.”

In many situations, current data is likely inherently biased. Many industries must reckon with the discriminatory practices in their past before using AI to make decisions on behalf of the country. Illuminating this context is essential.

Why do so many AI data fail to care for diversity?

“In many academic environments, the criteria used in the selection of training data are technical; for instance, image datasets are chosen to include a variety of angles, scales, resolutions, lighting conditions, contrast, noise, etc., as those are the core technical hurdles that vision algorithms need to overcome. The practical considerations and caveats of developing products based on AI are not the point. Out in the open, however, AI is not just the engineers behind it but the whole feast of functional disciplines that get behind a product during its development, launch, and commercial life.”

Improve Future Data Sets: Synthetic vs. Real-World Data

Fixing AI bias in the future relies on the data sets used to create these technologies. Data sets that intentionally or unintentionally exclude specific groups of people or circumstances lead to biased results. While this semi-manual task might be arduous or time-consuming, it is vital for the future of fair AI results. This process often requires 50-100K diverse data pieces.

In some instances, AI and machine learning train with synthetic data. Synthetic data refers to data that is not “real,” such as training an image generator on previously generated images. The problem with this data is that it reinforces bias within a closed information system.

❌ The Problem with Synthetic Data

“The image generators we develop are merely poised to mimic and reflect their existing constraints, rather than resolve them. We can’t use these technologies to create what the training data doesn’t already contain.

As a result, the images they produce could reinforce the biases they’re seeking to eradicate.”

Leo Kim, Fake Pictures of People of Color Won’t Fix AI Bias

✅ Organizations Doing the Work

Black Women in AI: Amplifying the voices of Black Women In Artificial Intelligence.

Diversity in Artificial Intelligence: A think tank for inclusion, balance, and neutrality in artificial intelligence.

The Issue with Marketing with Synthetic Data

In the past year, instances of choosing synthetic data of brands replaced diverse talent with AI representations. While this tactic may work in samples (like the Metaverse) where authentic world images are absent, it is crucial to interrogate situations in which artificial representations are chosen over diverse talent.

👖 Example: Levi’s Choice to Use AI Models

The AI Application: In 2022, Levi’s announced they would begin using Lalaland.ai to showcase more inclusive images of their fashion inventory. Generally, Levi’s uses a single image or model per product. The AI would allow the brand to showcase its products across diverse models, including “hyper-realistic models of every body type, age, size, and skin tone.”

The Issue: While Levi Strauss was correct in assuming consumers would be interested in seeing their inventory worn by a diverse audience, the choice to use AI to achieve this was met with backlash. Leaning on AI over diverse talent disproportionately impacts underrepresented groups, taking opportunities from these models.

The Solution: Showcasing products, especially fashion, in new and diverse contacts will likely help sell products. Rather than leaning on instances that dismiss hiring diverse models, Levi’s could easily rely on AI to change the clothes instead of the models. For example, showcasing different wash jeans or jeans fit/length.

Leaning on AI for diverse models over pictures of actual people threatens to showcase biased images. More importantly, it takes opportunities away from the diverse talent pool.

What impact does AI-created “diversity” in advertising have on inclusion and representation in media?

“As a Talent Manager for Deaf and Disabled actors, online creators, and models, every day, I see that the opportunities for Disabled people to be represented in media are so few and far between. To see these opportunities being outsourced to AI-generated models is just offensive. We make up 25% of the US population, and as the largest minority (that you can join at any time), we have the least representation in media. Our community has a long and storied history of being forcibly removed from society, and we can’t have that happen again. AI-generated ‘diversity’ indicates that a brand has zero understanding of the intersectional communities it hopes to reach and comes across as completely out of touch.”

AI creators, choosing efficiency, rely on technology over other solutions for these issues. The biased results bring real-world consequences. Moving forward, pausing and making space for DEI solutions will remain important. To care for bias, these teams must include other DEI, social scientists, and more to care for a potential impact.

Improving Future Data Sets: Transparency

Another crucial step in improving AI bias and consequences relies on transparency regarding the training data. Since data sets and information can be massive, it is often tricky (with time and cost) to share the exact data sets utilized to train AI. A lack of transparency makes it incredibly difficult for other AI engineers and language-learning model teams to learn from past mistakes. To build better AI, however, this step is crucial.

Why is transparency critical to the future of fair AI?

“We should ask what kind of data is fed into current public models, but that information is private. This lack of model transparency leads to a lack of accountability and is another glaring issue that roadblocks ethical AI efforts. We also need to hold companies accountable for the models they put into the world and not let “experimental” labels become a loophole.”

Laws and Legislation Requiring Intervention

As AI becomes engrained across more industries, legislation and government intervention will be necessary. Setting guardrails around AI applications helps protect ordinary citizens against biased results and harm.

👩🏽⚖️ Example: New York City Bias Audit Law (Local Law 144)

In 2021, NYC enacted a law that prevents the use of automated systems in hiring and promoting employees unless the system is independently audited first. This regulation aims to avoid discriminatory practices by companies.

Building Better, More Responsible AI

If not designed to eliminate biases, AI systems perpetuate systemic racism and sexism, harming vulnerable populations. Despite being programmed by humans, machine learning models often still learn from limited data sets, which can result in discriminatory outcomes.

Improving the diversity and inclusivity of the teams that create and train AI models can help address these issues. Researchers and developers can also implement bias detection and mitigation techniques to identify and eliminate discriminatory outcomes. While there are challenges to developing unbiased AI systems, such as data limitations and algorithmic complexity, addressing these issues is necessary to prevent harm and ensure fairness.

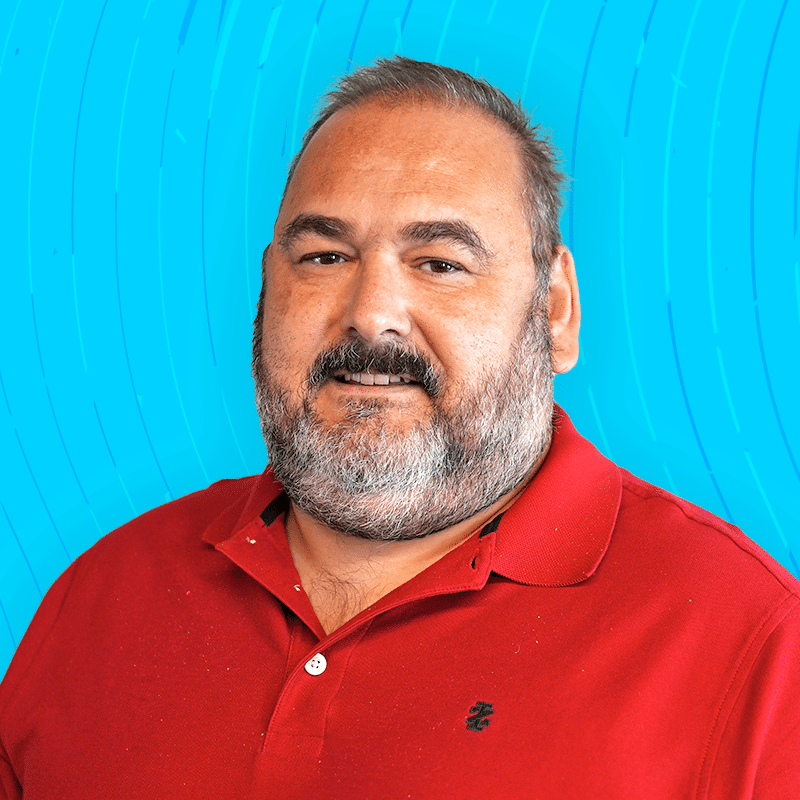

Damon Henry is the Founder and CEO of KORTX, a digital media, strategy, and analytics company. He enjoys building great software and great companies.